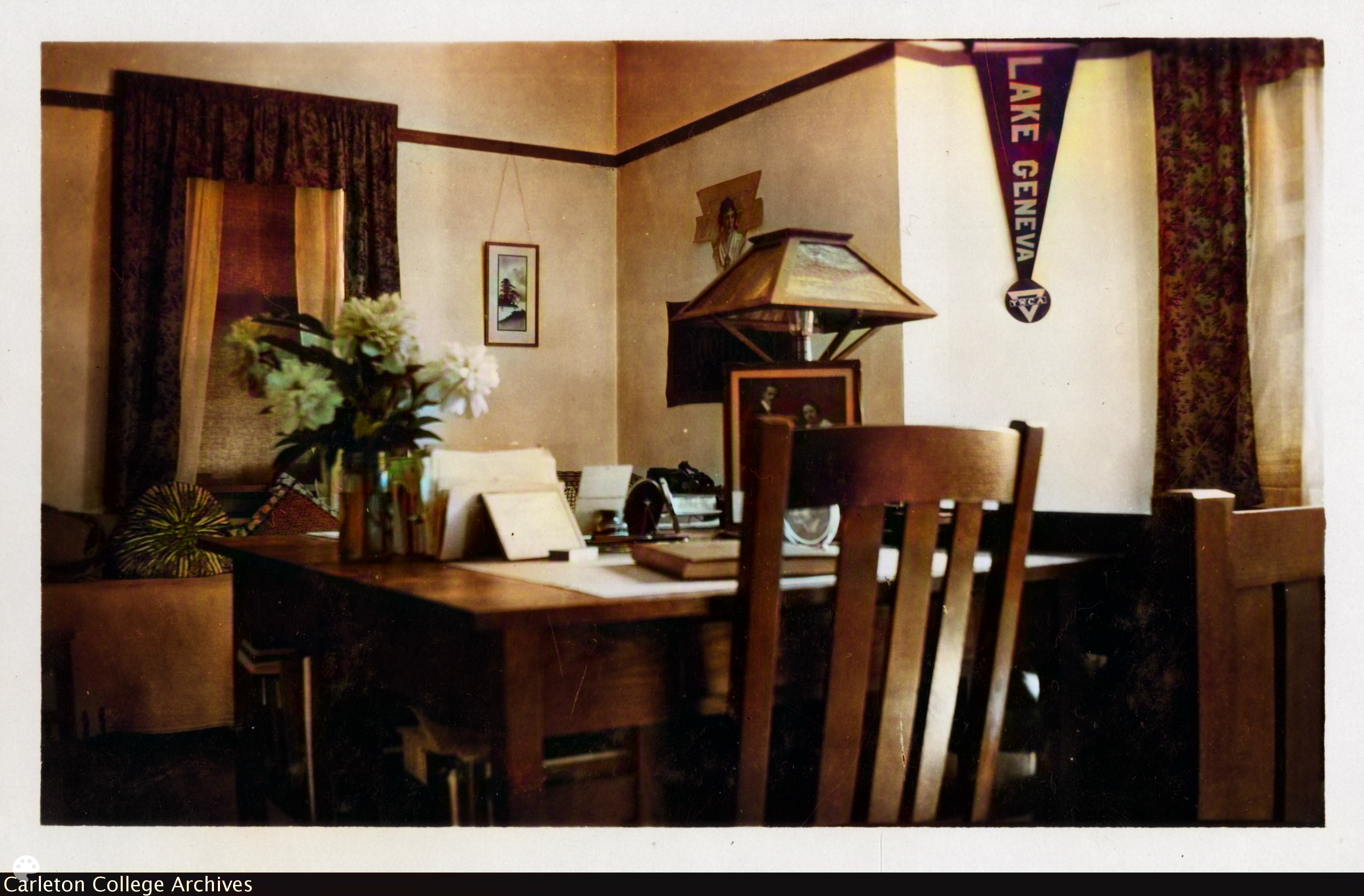

This week we were tasked with colorizing a photograph of a Carleton interior using DeOldify, an AI colorization model, and publishing the result on Omeka. I chose the picture “Interior of a girl’s room” from the Stork Collection. Colorizing it raised some concerns for me about the ethics of AI and its use in colorizing black and white images. The biggest was how to weigh the value of reducing the perceived historical distance against the potential inaccuracies of the recreation. My photo, produced at the default render factor of 35, still has artifacts and issues. The flowers on the desk are strangely green, and the whole image seems to have gone from black and white to simply sepia. The image has a distinct lack of vibrancy; even the floral-patterned curtains appear to be plain brown.

This problem of inaccuracy is raised by both the art history article and the CRIME SCENES: Interbelluminterieurs in België project. As the project puts it (in translation):

“The edited photos therefore do not give us absolute certainty about the actual colours.”

CRIME SCENES: Interbelluminterieurs in België

It’s this lack of certainty that irks me. What biases are hidden in these models’ training data, in this black box? In class we saw an example of a Black woman whose skin had been lightened in the DeOldify recreation compared with the original photograph. Inaccuracy may not be so harmful for something like an interior, but what happens when it is applied to people, to their lives, to how their world actually appeared? I think the art history article puts it best:

“Adding color does not show things as they were but recreates what is already a recreation – photograph – in our own image, now with computer science’s seal of approval.”

How AI is hijacking art history

I don’t know if the risk of misrepresentation is worth the reduced distance. I can imagine these scenes in color just as easily from the original photos as I can from the recreations. Maybe the trade-off is worth it to most people, but I can’t help thinking that, until the models become more accurate than they are now, it’s just not worth the potential harm.

I agree with your point that AI does not know the true colors but instead predicts them from its training data. In this case there is not much risk in a discolored output, but, as you pointed out, we have seen cases where this can be problematic. I also agree that diminishing the perceived distance of the photos might not be a good step. I think the original photos hold all the data available, and it is our job, not AI’s, to interpret them in a modern context. While AI colorization can be useful in some cases, I agree it is not worth the potential harm.